As artificial intelligence (AI) technology accelerates into every aspect of modern life, it has now reached a new frontier: political campaigns. A recent example in New York City’s Queens borough has raised questions about how much AI-generated content is acceptable in local elections—and whether voters should be informed when they see it.

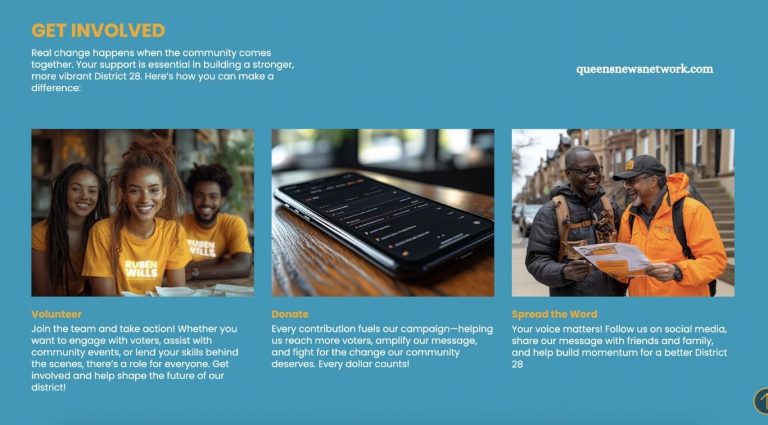

Queens City Council candidate Ruben Wills has found himself at the center of this discussion. His campaign website contains numerous photos that, according to experts and image-detection tools, appear to be artificially generated. These images are not deepfakes or manipulated content of Wills himself. Instead, they portray generic scenes like community gatherings, campaign volunteers, and public spaces—imagery typically used to convey grassroots support and neighborhood engagement.

But there’s one big issue: none of these AI-generated visuals are labeled as such.

The Subtle Digital Shift in Wills’ Campaign

Like most local campaign websites, Wills’ site follows a familiar formula. It features the candidate’s biography, outlines his policy positions, and includes visuals meant to reflect his connection with the community. However, a closer look reveals visual oddities: distorted faces, awkward hand positions, and unrealistic textures—hallmarks of AI-generated images.

One image shows a group of people at a playground, but their facial features appear warped and unnatural. In another, a man wears a backpack with implausible straps and shapes. A third photo displays three smiling individuals wearing “Ruben Wills for City Council District 28” t-shirts, standing near a stack of visibly malformed papers. These subtle visual imperfections are common artifacts in AI image generation, where human features and fine details are often the first to fail under close scrutiny.

Expert Confirmation: “Definitely AI-Generated”

Dr. Syed Ahmad Chan Bukhari, an associate professor of computer science at St. John’s University who specializes in AI, reviewed the photos at the request of The Eagle. His assessment was clear: the images are likely artificial.

“You can tell an image is AI-generated by closely observing unnatural details like inconsistent lighting, distorted textures, or overly sharp and flawless features that don’t match real-world imperfections,” Dr. Bukhari said.

Despite this, Wills’ campaign offers no disclosure to inform viewers that the images are AI-generated. Repeated requests for comment from Wills went unanswered.

The AI Creators Behind the Campaign

Wills’ campaign website was developed by C&P Creative, a New Jersey-based firm that openly incorporates AI tools into its political and nonprofit marketing strategies. While the firm’s website and YouTube content acknowledge their use of AI in design and development, they also include a disclaimer:

“We are not AI professionals; our content is drawn from years of experience, research, and a passion for how AI can innovate in design, marketing, and development.”

Campaign finance records show that Wills paid C&P Creative $3,000 in May and still owes the firm an additional $4,000 for design services. Together, those amounts account for nearly 80% of his campaign’s total expenditures at that point.

When The Eagle attempted to contact C&P Creative, there was no response to emails, and the person who answered a listed phone number denied any affiliation with the company.

AI’s Expanding Role in Political Campaigns

Wills’ campaign is not alone in its use of AI. Across the political spectrum, AI-generated media is rapidly becoming a campaign tool.

President Donald Trump has used AI images extensively on social media, including humorous depictions of himself wielding a lightsaber or dancing alongside Elon Musk. His administration has also used AI in formal documents: his health secretary, Robert F. Kennedy Jr., presented a health plan allegedly generated with AI, including fabricated medical citations.

Closer to home, Andrew Cuomo, a former New York governor and current mayoral hopeful, faced scrutiny in April after a housing plan he released included a citation generated by ChatGPT. His campaign insisted the tool was only used for research support.

New Jersey Congressman Josh Gottheimer, currently running for governor, embraced AI openly in a televised campaign ad that featured AI-generated imagery of himself as a boxer. Unlike Wills, Gottheimer’s team disclosed the images were artificially created.

Legal Gray Areas and Loopholes in AI Disclosure

In response to growing concerns, New York passed legislation as part of the FY2025 state budget requiring that any “political communication” involving manipulated or AI-generated media must include a disclosure. The bill, introduced by Queens Assemblymember Clyde Vanel and State Senator Kristen Gonzalez, is one of the first attempts at creating ethical boundaries for AI in politics.

However, the law’s language leaves room for interpretation. It doesn’t differentiate between small-scale stock images and high-impact deepfakes. Nor does it specify how disclosures must be made, leaving enforcement and interpretation largely up to individual campaigns—or to complaints filed with the Board of Elections, which has so far been silent on how it plans to regulate undisclosed AI usage.

The Bigger Picture: Ethics, Intent, and Voter Trust

For advocacy groups and legislators, the real concern is the intent behind the use of AI.

“In politics, we know that we do not want deceptive images,” said Susan Lerner, executive director of Common Cause New York. “We don’t want the public thinking something is true when it’s not.”

Senator Gonzalez echoed that sentiment, emphasizing that while some uses—like generating a simple smartphone graphic—may be harmless, others cross into ethically questionable territory.

“I do not think campaigns should be auto-generating images of volunteers, supporters, voters that they might not have,” Gonzalez said. “Broad support is still a barometer for how a voter might make a decision.”

Gonzalez also noted that voters are often influenced by the appearance of widespread support, and showing artificially created supporters wearing branded campaign merchandise can be misleading, even if unintentionally.

Regulation Can’t Keep Pace With Technology

Despite legislative progress, both Gonzalez and Lerner agree that AI is evolving too rapidly for existing laws to effectively keep up.

“We have not really hit the right level of regulation for AI,” said Lerner. “The public needs more awareness of how pervasive it can be.”

Gonzalez acknowledged that even since the passage of their bill, AI technology has progressed faster than anticipated.

“We are in a new place,” she said. “We’ll need to return to the table and revisit our approach.”

Transparency: The Only Real Safeguard

At its core, the issue isn’t necessarily that campaigns are using AI—it’s that many are doing so without disclosing it, potentially deceiving voters or giving them a false sense of candidate popularity or community engagement.

“So long as you disclose it,” Lerner emphasized, “you’re allowing voters to make an informed decision.”

That’s the heart of the matter. AI is not inherently unethical. But when used in politics—where perception often becomes reality—it must be accompanied by transparency, especially when the images convey trust, support, and legitimacy.

A Glimpse Into the Future of Campaigning

As election seasons grow increasingly digital, candidates across the U.S. are likely to rely more heavily on AI. Whether it’s crafting policy documents, producing social media content, or designing campaign websites, the line between authenticity and fabrication will become harder to discern.

Experts argue that New York’s existing legislation is a good first step, but vigilance and adaptation will be key moving forward.

“Even if you regulate AI today,” said Gonzalez, “by the time it’s implemented, the tech will have already changed.”

Frequently Asked Questions

Who is the Queens council candidate accused of using AI-generated campaign images?

Ruben Wills, a former New York City Councilmember, is the candidate whose campaign website appears to use multiple AI-generated images that depict supporters and local scenes. An AI expert and image analysis tools indicate that many of these visuals are likely artificial.

Are AI-generated images illegal in political campaigns?

No, AI-generated images are not currently illegal. However, New York State law now requires disclosure when AI or manipulated media is used in political communications. The law does not distinguish between harmless use and deceptive intent, but aims to increase transparency for voters.

Did Ruben Wills disclose the use of AI on his campaign website?

As of now, there is no visible disclosure on Ruben Wills’ campaign website indicating that AI-generated images were used. This lack of transparency raises concerns about compliance with state regulations and ethical campaigning standards.

Why does the use of AI in campaign images matter?

AI-generated visuals can mislead voters, especially when they simulate grassroots support, community involvement, or real-life events. Deceptive imagery may influence public opinion or voter trust, even unintentionally, which is why experts and legislators emphasize transparency.

What kind of AI-generated images were used on Wills’ campaign site?

The images include distorted depictions of community events, supporters in branded campaign shirts, and objects with unnatural features. These inconsistencies—like malformed hands, blurred faces, or unreadable text—are common signs of AI-generated content.

Has AI been used in other political campaigns?

Yes. High-profile figures like Donald Trump, Andrew Cuomo, and Josh Gottheimer have used AI in various forms—ranging from social media posts to formal policy documents and campaign ads. Some disclosed the use, while others faced scrutiny for lack of transparency.

What does New York State law say about AI in political ads?

New York law now requires any political communication using AI or manipulated media to include a clear disclosure. The goal is to ensure voters know when they are seeing synthetic or altered content. Enforcement relies on complaints and legal action rather than proactive monitoring.

Conclusion

The growing use of artificial intelligence in political campaigns—like that seen on Queens City Council candidate Ruben Wills’ website—marks a significant shift in how candidates engage with voters. While AI offers tools that are faster, cheaper, and more visually compelling, its use without disclosure raises serious ethical and legal questions.

At its core, this isn’t just a debate about technology; it’s about trust. When voters see images of community support or campaign events, they assume those moments are real. Replacing them with AI-generated simulations, especially without clear disclosure, risks misleading the public and undermining the authenticity that campaigns are built on.